How to Think About AI Risk and Bias

Stop placing responsibility for AI only at the top of the pyramid; instead, think like you’re building one.

Most people think of AI responsibility like a pyramid — it’s all on top. But the truth is, AI risk and bias management is more like building a pyramid.

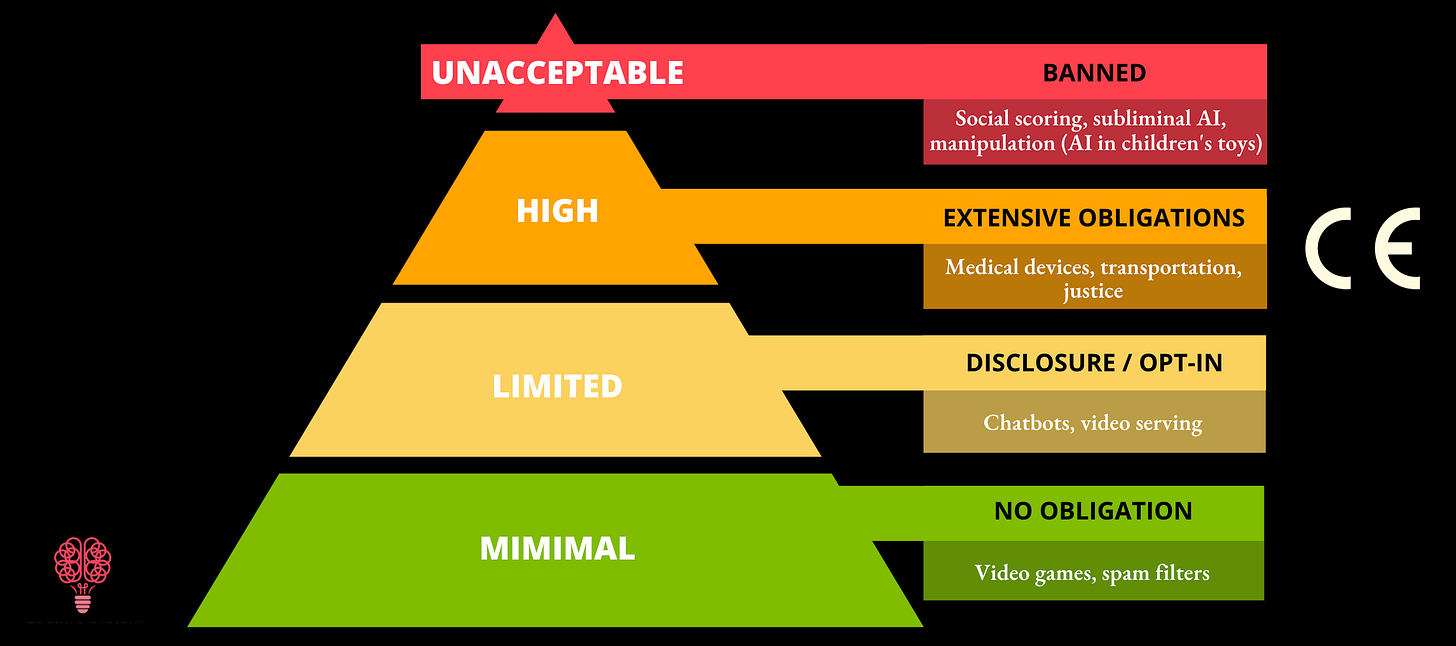

Of course, responsibility starts at the top. The EU AI Act describes the levels of AI use, from applications with minimal risk, like email spam filters, to those with high stakes, like using AI in medical devices or transportation systems. Minimal-risk applications carry no public obligations in the EU, while high-risk applications carry extensive commitments.

But the EU AI Act triangle can send a confusing message. Executives at the top aren't solely responsible for adjudicating AI. Yes, CEOs, CDOs, Chief Risk Officers, the Googles, Amazons, and Microsofts play a significant role in safe, ethical, unbiased AI.

But those who use AI wisely view oversight more like building a pyramid than living in one. Historians estimate it took 20,000 workers to build a single pyramid: drafting technicians, masons, artisans, engineers, overseers… it was a massive, diverse, coordinated sea of effort, all working together.

Like our current AI age, those in the pyramid age created new tools on the fly.

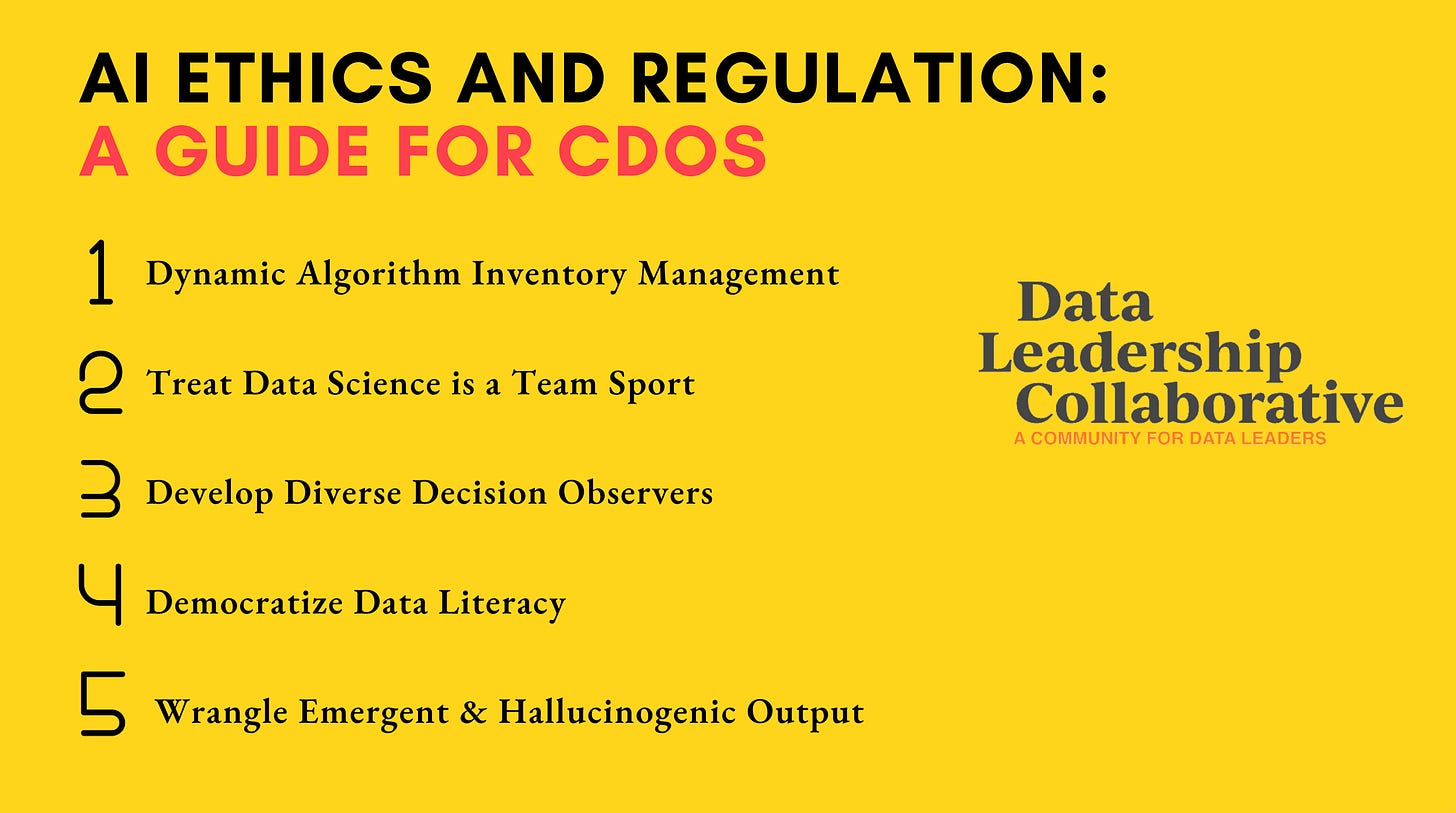

My recent article for the Data Leadership Collaborative, AI Ethics, and Regulation, a Guide for CDOs, explores five ideas to help leaders think like a pyramid builder about AI.

They are:

Dynamic Algorithm Inventory Management

Treat Data Science as a Team Sport

Develop Diverse Decision Observers

Democratize Data Literacy

Wrangle Emergent & Hallucinogenic Output

Dynamic algorithm inventory management, or simply cataloging where AI is used, may seem like basic hygiene but is increasingly complicated with the rise of generative AI. Tools like GPT are uniquely tricky to manage because their predictions are, by their very nature, dynamic. Leaders should consider changing the cadence of how frequently they assess the observations, content, and insights algorithms generate and use.
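As a rough illustration of what a dynamic inventory with an adjustable review cadence might look like, here is a minimal Python sketch. Everything in it is a hypothetical assumption made for the example: the `AlgorithmRecord` fields, the tier names, the cadence values in `REVIEW_CADENCE_DAYS`, and the sample entries. None of it comes from the EU AI Act or from any particular governance tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical review cadences (in days) per tier. The numbers are
# illustrative assumptions, not regulatory guidance.
REVIEW_CADENCE_DAYS = {"minimal": 365, "high": 90, "generative": 30}


@dataclass
class AlgorithmRecord:
    """One entry in the inventory: where an algorithm is used and who owns it."""
    name: str
    owner: str
    risk_tier: str        # must be a key of REVIEW_CADENCE_DAYS
    last_reviewed: date

    def next_review(self) -> date:
        # The tier decides how long a review stays valid.
        return self.last_reviewed + timedelta(days=REVIEW_CADENCE_DAYS[self.risk_tier])

    def is_overdue(self, today: date) -> bool:
        return today > self.next_review()


def overdue_reviews(inventory, today):
    """Return the names of overdue records, most overdue first."""
    late = [record for record in inventory if record.is_overdue(today)]
    return [record.name for record in sorted(late, key=lambda r: r.next_review())]


# Hypothetical sample inventory.
inventory = [
    AlgorithmRecord("spam-filter", "IT", "minimal", date(2024, 1, 15)),
    AlgorithmRecord("support-chatbot", "Customer Care", "generative", date(2024, 4, 1)),
    AlgorithmRecord("triage-model", "Clinical Ops", "high", date(2024, 2, 15)),
]

print(overdue_reviews(inventory, today=date(2024, 6, 1)))
# → ['support-chatbot', 'triage-model']
```

The design choice worth noticing is that the cadence lives in one table rather than on each record, so leaders can shorten the cycle for generative tools in one place as their behavior changes.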

Treating data science as a team sport is a principle espoused by many, from pyramid builders to Nobel Prize-winning behavioral economist Daniel Kahneman in Noise: A Flaw in Human Judgment. But how do we nurture AI teamwork? From a behavioral economics point of view, Kahneman raised the importance of collaboration based on research showing that humans can't spot their own biases, so it takes a team to identify and mitigate bias in the actions of AI.

Kahneman introduces the idea of Decision Observers, or folks with the humanist and data skills needed to interpret bias and risk. Decision Observers ask more profound questions and consider AI's unintended implications, actions, and ethics. The introduction of Decision Observers is idea three, "Develop Diverse Decision Observers."

Like building a pyramid, AI governance takes thousands of people, not just a few at the top. "Democratize data literacy" is the imperative to provide data and AI literacy more broadly. For example, Dr. Andy Moore of Bentley Motors uses karate Dojo levels, from White Belt to Black Belt, to track and encourage data literacy throughout his organization.

Finally, "Wrangle Emergent Behavior and Hallucinations" is suddenly important as firms rush to deploy GPT-based services in the enterprise. Some generative models exhibit mysterious "emergent" behavior and hallucinations that fall outside traditional procedural approval processes. The implications of generative AI on governance are still unfolding.

For example, emergent abilities are capabilities that are not present in smaller models but emerge in larger ones. In the paper Emergent Abilities of Large Language Models, researchers at Google, Stanford, DeepMind, and the University of North Carolina explored novel tasks that LLMs can accomplish as they grow larger and are trained on more data.

The study shows that once the size of a model reaches a certain threshold, performance on these tasks jumps sharply.

These generative capabilities have a profound impact on how data professionals manage and govern AI, which is why dynamic algorithm inventory management, treating data science as a team sport, developing diverse decision observers, and democratizing data literacy are so essential.

What can you do now to create a more effective culture of AI governance?

Take action on concrete ideas like hiring more Decision Observers, expanding literacy training programs, or implementing a Dojo-level scheme to encourage and motivate everyone to participate in bias and risk mitigation.

Join the Data Leadership Collaborative to follow the best practices and thinking shared by senior data and AI leaders, and

Get ready to tune into the new podcast I'll be hosting, sponsored by Correlation One, called Data, Humanized, for discussions with leaders tackling today's literacy, transformation, and governance issues.

Read AI Ethics, and Regulation, a Guide for CDOs on the Data Leadership Collaborative.